Agile Team Benchmark for a Turkish Telecom Company

The Issue at Hand

The company has many teams that have adopted an agile way of working, but these teams only use story points to estimate work and track velocity. Because story point metrics are very team-specific and vary widely between teams, it is impossible to aggregate them into meaningful management information. Still, higher management expressed an interest in obtaining more insight into the productivity of the teams and the quality of the products they develop and maintain.

The Service Provided

We carried out an Agile Team Benchmarking project to understand the productivity, cost efficiency, delivery speed, and process quality of the team and the code quality of the product they develop and maintain, and how these metrics compare to industry peers. The approach used was the following:

One team was selected as the scope of the measurement.

For this team, a set of sprints was selected to measure.

A certified FPA analyst measured the functional size delivered by the team in these sprints.

In the meantime, data was collected on these sprints, e.g., effort hours spent per function and defects found and resolved.

Using the data and the functional size delivered, the following metrics were calculated:

Productivity (hours spent per FP delivered).

Cost Efficiency (cost spent per FP delivered).

Delivery Speed (FP delivered per calendar month).

Process Quality (defects per FP delivered).
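The four metrics above can be sketched as a small calculation. This is only an illustration under stated assumptions: the `SprintData` fields, the function name `benchmark_metrics`, and the choice to aggregate over all measured sprints before dividing are hypothetical and not taken from the project itself.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class SprintData:
    """Measurements for one sprint (all field names are illustrative assumptions)."""
    function_points: float  # functional size delivered, as counted by the FPA analyst
    effort_hours: float     # effort hours spent in the sprint
    cost: float             # cost spent in the sprint, in a single currency
    defects: int            # defects found in the sprint
    calendar_months: float  # sprint duration expressed in calendar months


def benchmark_metrics(sprints: List[SprintData]) -> Dict[str, float]:
    """Aggregate the measured sprints and derive the four benchmark metrics."""
    total_fp = sum(s.function_points for s in sprints)
    total_months = sum(s.calendar_months for s in sprints)
    if total_fp <= 0 or total_months <= 0:
        raise ValueError("metrics are undefined without delivered size and elapsed time")
    return {
        # Productivity: hours spent per FP delivered
        "hours_per_fp": sum(s.effort_hours for s in sprints) / total_fp,
        # Cost Efficiency: cost spent per FP delivered
        "cost_per_fp": sum(s.cost for s in sprints) / total_fp,
        # Delivery Speed: FP delivered per calendar month
        "fp_per_month": total_fp / total_months,
        # Process Quality: defects per FP delivered
        "defects_per_fp": sum(s.defects for s in sprints) / total_fp,
    }


if __name__ == "__main__":
    # Invented numbers, for illustration only.
    example = [
        SprintData(function_points=40, effort_hours=400, cost=20000, defects=3, calendar_months=0.5),
        SprintData(function_points=60, effort_hours=500, cost=25000, defects=2, calendar_months=0.5),
    ]
    for name, value in benchmark_metrics(example).items():
        print(f"{name}: {value}")
```

Because every metric is normalized by functional size rather than story points, the results can be compared across teams and against peer groups.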

These metrics were benchmarked against carefully selected peer groups based on factors such as the technology used, industry sector, team size, etc.

In addition, a code quality measurement was carried out to understand the maintainability, performance, security, robustness, and transferability of the code.

The Outcomes Achieved

All results were reported to both management and the team, and the team also received an actionable plan to improve the metrics and code quality. The benchmark showed that the team's performance was quite good, better than that of the peer group. Interestingly, the code quality of the application's custom code was also very good: very few critical violations of important ISO 5055 and ISO 25010 rules were present, and those that were present could be resolved in relatively little time.

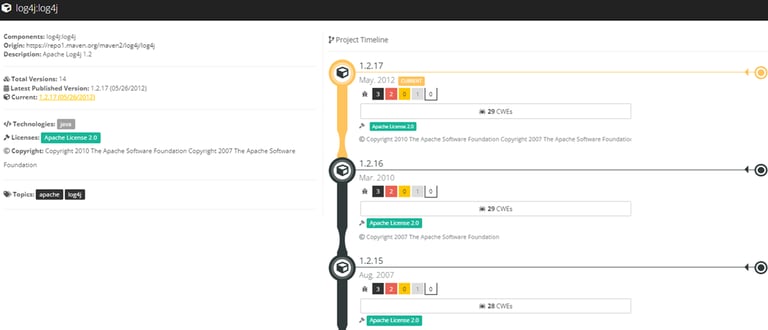

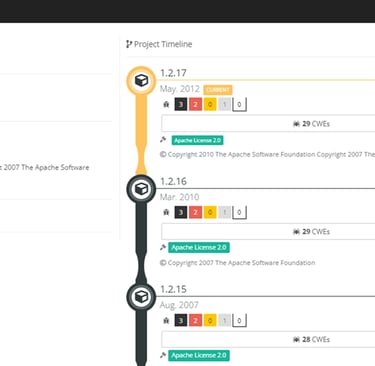

However, it turned out that the application still carried a lot of risk, as it contained many out-of-date and very risky open-source components. The company did not have an open-source component management process in place, and the components used were never updated or replaced by newer ones. The automatic code analysis showed all the open-source components used in the code, the version used, all risks in that version (known vulnerabilities and license risks), and a timeline of newer available versions, including the vulnerabilities and license risks in those versions.
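To illustrate how such a version timeline can support an upgrade decision, here is a minimal sketch. It is not the logic of the analysis tool used in the project: the `Release` type, the `safest_upgrade` function, and the selection rule (no license risk, fewer known vulnerabilities than the current version, newest release wins ties) are assumptions made purely for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Release:
    """One version of an open-source component (hypothetical structure)."""
    version: str
    known_vulnerabilities: int  # number of known vulnerabilities in this version
    license_risk: bool          # True if the license of this version poses a risk


def safest_upgrade(current: Release, newer: List[Release]) -> Optional[Release]:
    """Pick an upgrade target from `newer`, assumed to be ordered oldest to newest.

    Candidates must carry no license risk and have fewer known vulnerabilities
    than the current version. Among candidates, the one with the fewest
    vulnerabilities is chosen; ties go to the newest release.
    Returns None when no newer release is an improvement.
    """
    candidates = [
        r for r in newer
        if not r.license_risk
        and r.known_vulnerabilities < current.known_vulnerabilities
    ]
    if not candidates:
        return None
    # min() returns the first minimum it sees, so scanning newest-first
    # makes the newest release win a tie.
    return min(reversed(candidates), key=lambda r: r.known_vulnerabilities)


if __name__ == "__main__":
    # Invented data, for illustration only.
    in_use = Release("1.2", known_vulnerabilities=7, license_risk=False)
    timeline = [
        Release("1.3", known_vulnerabilities=4, license_risk=False),
        Release("2.0", known_vulnerabilities=0, license_risk=True),
        Release("2.1", known_vulnerabilities=1, license_risk=False),
        Release("2.2", known_vulnerabilities=1, license_risk=False),
    ]
    target = safest_upgrade(in_use, timeline)
    print(target.version if target else "no safer release available")
```

A real component management process would also weigh severity of vulnerabilities, breaking changes, and maintenance status, none of which this sketch models.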

The use of older open-source components is risky in many ways. Apart from the obvious potential security risks, there may be license risks. Some licenses can even force you to release the complete application code to the community. In addition, software obsolescence means that older open-source components may no longer be maintained by anyone. Some may even have been written in obscure languages nobody knows any longer. This means that if a problem arises in such a component, it is nearly impossible to fix it yourself, and nobody else is going to fix it. Unfortunately, we have seen this in many organizations: too little attention is given to updating open-source components, which can put entire companies at risk of, for instance, a ransomware attack.

The results of the Agile Team Benchmark project were very helpful for the company, as they identified an important 'blind spot' in its application development processes, which the company addressed immediately.

The main deliverables were:

A Management presentation with the major findings, observations, and recommendations, delivered on site at the headquarters of Turkcell in Istanbul.

A team meeting to show the most important findings and to explain the results and the improvement plan.

A Management Health dashboard with the scores on the health factors, the critical violations found, and metrics like technical debt, technical size, functional size, etc.

An Engineering dashboard with the (critical) violations found in the code, their exact location, the reason they are considered (critical) violations with reference to the source standard or best practice, and how to remediate them.

An Architectural Blueprint in Imaging, where all connections between the components, frameworks, layers, etc., are made visual to facilitate the understanding of how the application works.

An overview of the open-source third-party components used, their versions, the known vulnerabilities and license risks in these versions, and recommendations to upgrade the high-risk components to newer and safer versions.

An improvement plan with concrete actions to improve the quality of the application even further and to remediate the very few critical violations in the code, including the estimated effort to carry out the plan and a simulation of the resulting scores after the actions are carried out.